Pinyin Analyzer

On many websites, typing pinyin or keywords into the search box triggers an auto-completion feature that suggests what the user may be looking for.

Elasticsearch can do this. To search for products by pinyin, the text must be analyzed into pinyin; likewise, to offer completions based on the letters typed, documents must be tokenized by pinyin.

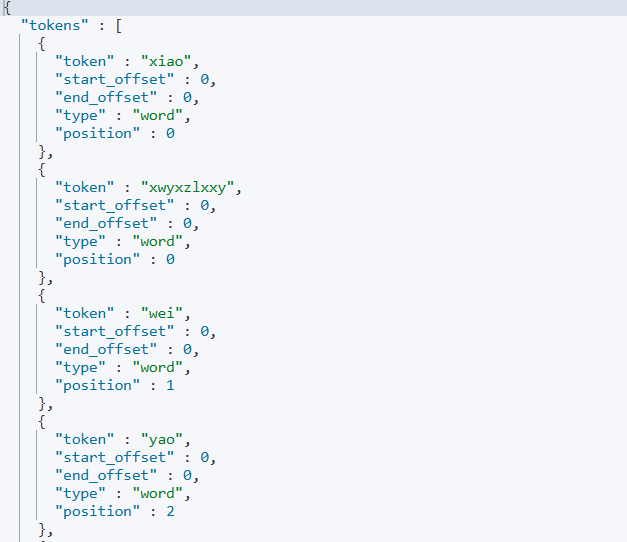

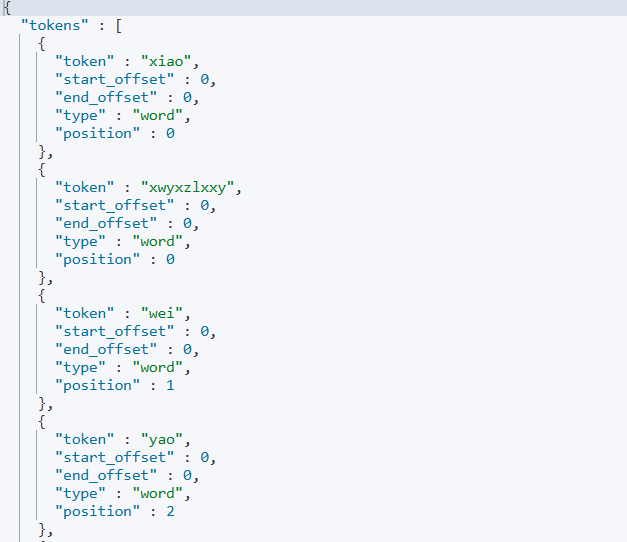

First, install the pinyin analysis plugin by downloading it into the mounted es-plugins directory (Elasticsearch must be restarted to load a new plugin). Once it is installed, we can test whether it works with the following DSL:

POST /_analyze

{

"text": ["Wee Willie needs to learn from the guys"],

"analyzer": "pinyin"

}

Custom Analyzers

Above we used pinyin as the analyzer, but on its own it produces only pinyin tokens (per-character and combined). In production we usually need pinyin and Chinese words together, which requires a custom analyzer.

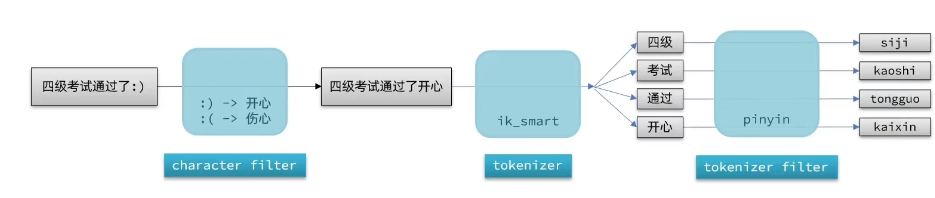

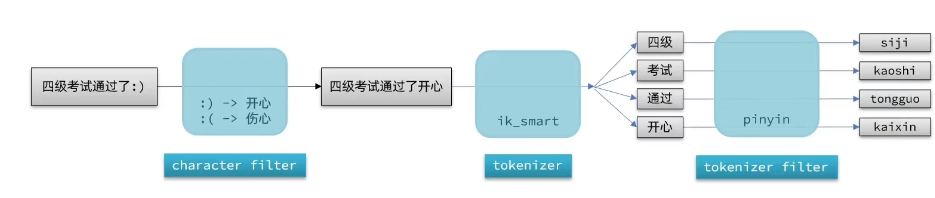

An analyzer in Elasticsearch consists of three parts:

- character filters: process the text before the tokenizer, for example by removing or replacing characters

- tokenizer: splits the text into terms according to certain rules. For example, keyword, which does not split the text at all, or ik_smart

- token filters: further process the terms emitted by the tokenizer, for example case conversion, synonym handling, or pinyin conversion
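The three stages above can be pictured as a simple pipeline. The following is a minimal pure-Python sketch of that idea, not Elasticsearch code: the function names are hypothetical, whitespace splitting stands in for a real tokenizer such as ik_smart, and lowercasing stands in for a real token filter such as pinyin.

```python
import re


def char_filter(text):
    # Character filter: runs before tokenization; here it strips HTML-like tags.
    return re.sub(r"<[^>]+>", "", text)


def tokenizer(text):
    # Tokenizer: splits text into terms; whitespace splitting is a stand-in
    # (assumption) for a real tokenizer such as ik_smart.
    return text.split()


def token_filter(tokens):
    # Token filter: post-processes each term; here it lowercases them.
    return [t.lower() for t in tokens]


def analyze(text):
    # An analyzer chains the three stages in this fixed order.
    return token_filter(tokenizer(char_filter(text)))


print(analyze("<b>Hello</b> Elasticsearch World"))
# ['hello', 'elasticsearch', 'world']
```

The order matters: character filters see the raw string, the tokenizer sees the cleaned string, and token filters only ever see individual terms.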

We can configure a custom analyzer through settings when creating the index:

// Custom pinyin analyzer

PUT /test

{

"settings": {

"analysis": {

"analyzer": {

"my_analyzer": {

"tokenizer": "ik_max_word",

"filter": "py"

}

},

"filter": { // custom token filter

"py": { // filter name

"type": "pinyin", // filter type is pinyin

"keep_full_pinyin": false,

"keep_joined_full_pinyin": true,

"keep_original": true,

"limit_first_letter_length": 16,

"remove_duplicated_term": true,

"none_chinese_pinyin_tokenize": false

}

}

}

},

"mappings": {

"properties": {

"name": {

"type": "text",

"analyzer": "my_analyzer", // index with the custom analyzer

"search_analyzer": "ik_smart" // search with the ik_smart analyzer

}

}

}

}

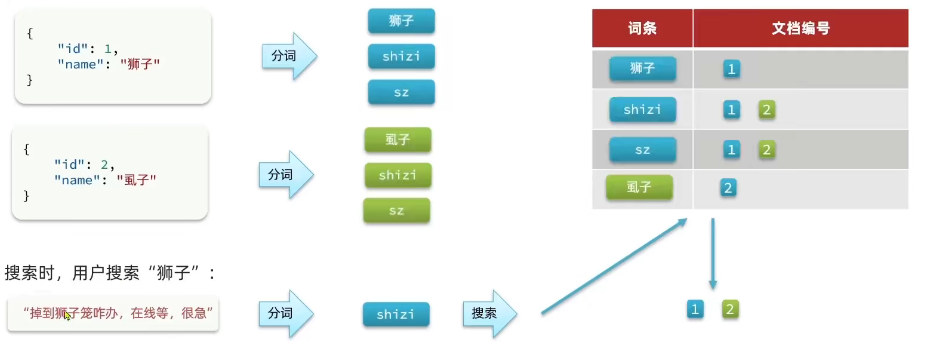

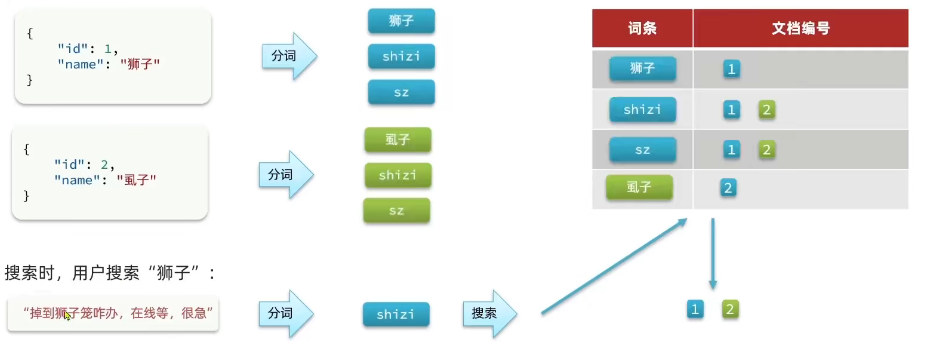

Index two documents into the index created above:

POST /test/_doc/1

{

"id": 1,

"name": "Lion"

}

POST /test/_doc/2

{

"id": 2,

"name": "Tick"

}

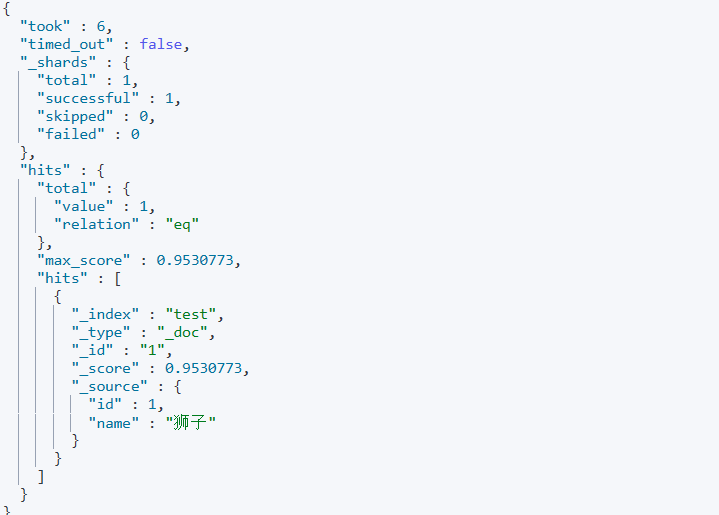

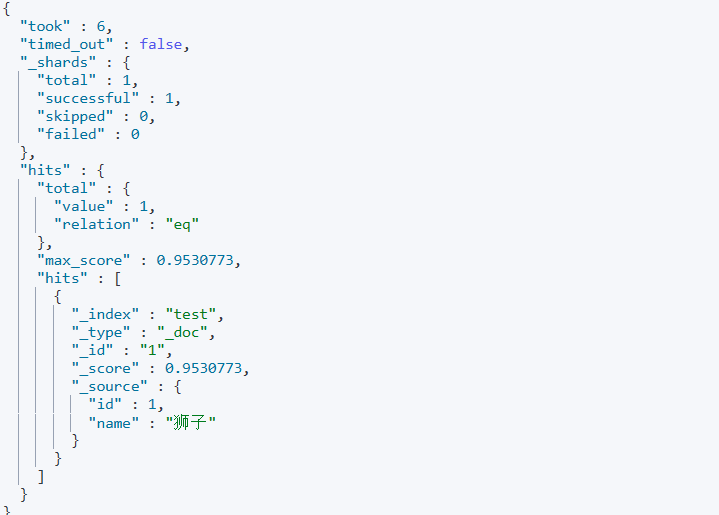

Because search_analyzer is set to ik_smart, the query text is not converted to pinyin at search time, so a search for "lion" returns only one result.

To avoid matching homophones (different words that share the same pinyin), use the pinyin analyzer when building the index and avoid it when searching.
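To see why the search side should skip pinyin, here is a small pure-Python sketch of an inverted index. It is an illustration under stated assumptions, not the plugin's implementation: the names 狮子 (lion) and 虱子 (louse), both romanized as shizi, are my own illustrative choice, the three-entry pinyin table is a toy, and `py_filter` only approximates the `py` filter configured above (original term, joined full pinyin, and first letters, assuming first-letter output is enabled).

```python
# Toy pinyin table (assumption): a stand-in for the plugin's real dictionary.
PINYIN = {"狮": "shi", "虱": "shi", "子": "zi"}


def py_filter(term):
    # Approximates the `py` filter: keep the original term, add the joined
    # full pinyin and the first-letter form, and drop duplicate terms.
    syllables = [PINYIN[ch] for ch in term if ch in PINYIN]
    if not syllables:
        return [term]
    joined = "".join(syllables)
    first_letters = "".join(s[0] for s in syllables)
    out = []
    for t in (term, joined, first_letters):
        if t not in out:
            out.append(t)
    return out


def build_index(docs):
    # Index time: every document name goes through the pinyin filter.
    index = {}
    for doc_id, name in docs.items():
        for term in py_filter(name):
            index.setdefault(term, set()).add(doc_id)
    return index


def search(index, query_terms):
    # A document matches if it contains any of the query terms.
    hits = set()
    for term in query_terms:
        hits |= index.get(term, set())
    return sorted(hits)


docs = {1: "狮子", 2: "虱子"}
index = build_index(docs)

# Search analyzer without pinyin: the query stays a Chinese term.
print(search(index, ["狮子"]))           # [1]

# Search analyzer with pinyin: the query also becomes "shizi" and "sz",
# which both documents share, so the homophone is returned as well.
print(search(index, py_filter("狮子")))  # [1, 2]
```

A user typing the pinyin shizi directly would still match both documents in this sketch, since both are indexed under that term.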

Completion Suggester

Elasticsearch provides the completion suggester query to implement auto-completion. The query matches terms that begin with the user's input and returns them. The fields involved must meet two requirements: the field that participates in the completion query must be of type completion, and its value is typically an array of several terms.

For example:

Step 1: create an index for auto-completion:

# Create the auto-completion index
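The matching rule can be sketched in a few lines of pure Python. This is a simplified model rather than how Elasticsearch implements it: the `suggest` function and its parameters are hypothetical, matching is a plain case-insensitive prefix test, each document contributes at most one suggestion, and results come back in document order instead of by score.

```python
def suggest(docs, prefix, size=10, skip_duplicates=True):
    # docs: one list of completion inputs per document.
    prefix = prefix.lower()
    results = []
    for inputs in docs:
        # Take the first input of this document that starts with the prefix.
        match = next((t for t in inputs if t.lower().startswith(prefix)), None)
        if match is None:
            continue
        if skip_duplicates and match in results:
            continue
        results.append(match)
    return results[:size]


# Illustrative product titles, each stored as an array of terms.
titles = [
    ["Sony", "WH-1000XM3"],
    ["SK-II", "PITERA"],
    ["Nintendo", "switch"],
]

print(suggest(titles, "s"))   # ['Sony', 'SK-II', 'switch']
print(suggest(titles, "wh"))  # ['WH-1000XM3']
```

Storing each value as an array is what lets a document be found from the start of any of its terms, not only the first one.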

PUT test2

{

"mappings": {

"properties": {

"title":{

"type": "completion"

}

}

}

}

Step 2: insert some completion data into the index:

# Example data

POST test2/_doc

{

"title": ["Sony", "WH-1000XM3"]

}

POST test2/_doc

{

"title": ["SK-II", "PITERA"]

}

POST test2/_doc

{

"title": ["Nintendo", "switch"]

}

Step 3: write the DSL query that performs the auto-completion:

# Auto-completion query

POST /test2/_search

{

"suggest": {

"title_suggest": {

"text": "s", // search for data starting with the keyword s

"completion": {

"field": "title", // complementary field

"skip_duplicates": true, // skip duplicates

"size": 10 // get the first 10 results

}

}

}

}

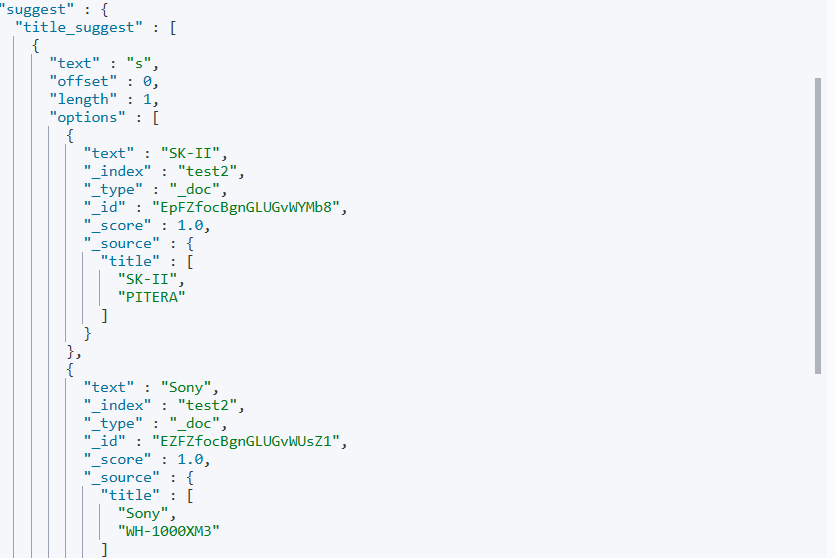

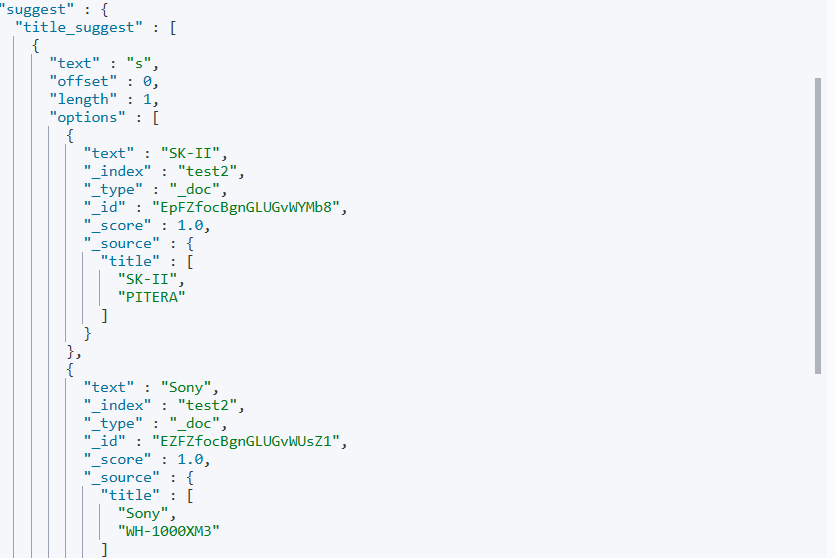

Executing the DSL above returns the completion suggestions for the prefix s.
