I. Preparing the Deep Learning Environment

My laptop runs Windows 10.

YOLOv8, the latest version of the YOLO series, has been released; for details, see the blog post I wrote earlier. Ultralytics has so far released part of the code and documentation. Download the YOLOv8 code from GitHub; the code folder contains a requirements.txt file that lists the packages to install.

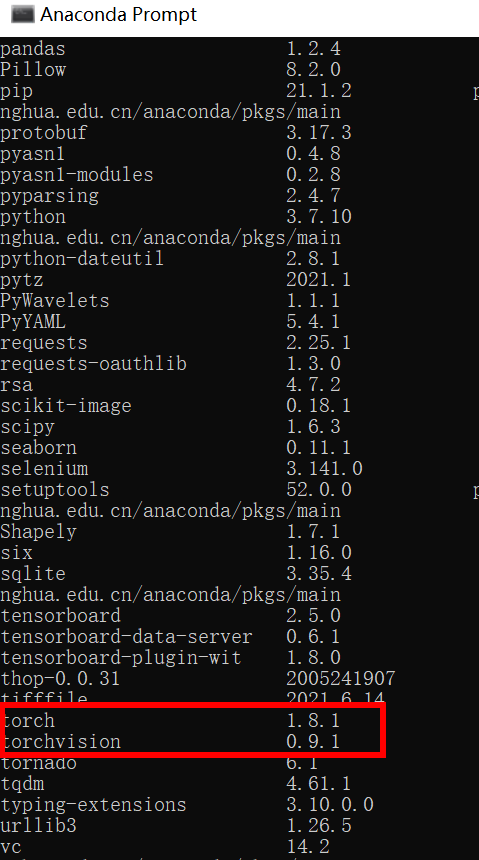

This article uses PyTorch 1.8.1, torchvision 0.9.1, and Python 3.7.10; the other dependencies can be installed from the requirements.txt file.
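To confirm which versions are actually installed, a small check like the one below can help. It is only a sketch: the helper name `report_versions` is made up for illustration, and it reads each module's `__version__` attribute, which PyTorch and torchvision expose but other packages may not.

```python
import importlib
import sys


def report_versions(modules=("torch", "torchvision")):
    """Return the Python version and the version of each module (None if missing)."""
    versions = {"python": sys.version.split()[0]}
    for name in modules:
        try:
            module = importlib.import_module(name)
        except ImportError:
            versions[name] = None  # module is not installed in this environment
        else:
            # Fall back to "unknown" for modules without a __version__ attribute
            versions[name] = getattr(module, "__version__", "unknown")
    return versions
```

Calling `print(report_versions())` in the environment used for training shows whether the installed versions match the ones listed above.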

You also need to install ultralytics; the core YOLOv8 code is currently packaged in this dependency, which can be installed with the following command:

    pip install ultralytics

II. Preparing your own dataset

I trained YOLOv8 on data in VOC format, so the following describes how to convert your own dataset into a form that YOLOv8 can use directly.

1. Create the dataset

My dataset is saved in the mydata folder (the name can be customized) with the directory structure below. Put the xml files previously labeled with labelImg and the images into the corresponding directories.

mydata

…images # Stores the images

…xml # Stores the xml file that corresponds to each image

…dataSet # Four files (train.txt, val.txt, test.txt, and trainval.txt) are generated here automatically later; they store the image names of the training, validation, and test sets (without the .jpg suffix)
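Before going further, it can be worth checking that every image has a matching xml file and vice versa. The sketch below assumes the images use the .jpg suffix mentioned above; the function name `find_unpaired` is hypothetical.

```python
import os


def find_unpaired(images_dir, xml_dir, image_ext=".jpg"):
    """Return (images without xml, xml files without image) as sorted name lists."""
    image_stems = {
        os.path.splitext(f)[0]
        for f in os.listdir(images_dir)
        if f.lower().endswith(image_ext)
    }
    xml_stems = {
        os.path.splitext(f)[0]
        for f in os.listdir(xml_dir)
        if f.lower().endswith(".xml")
    }
    missing_xml = sorted(image_stems - xml_stems)    # images with no annotation
    missing_image = sorted(xml_stems - image_stems)  # annotations with no image
    return missing_xml, missing_image
```

For the layout above, `find_unpaired("mydata/images", "mydata/xml")` should return two empty lists when the dataset is consistent.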

Examples are shown below:

The contents of the mydata folder are as follows:

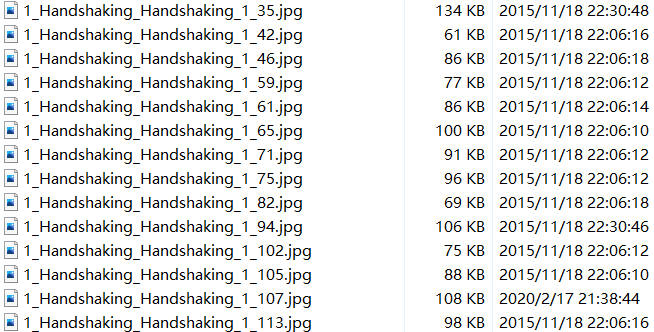

- The images folder corresponds to JPEGImages in the VOC dataset format, with the following content:

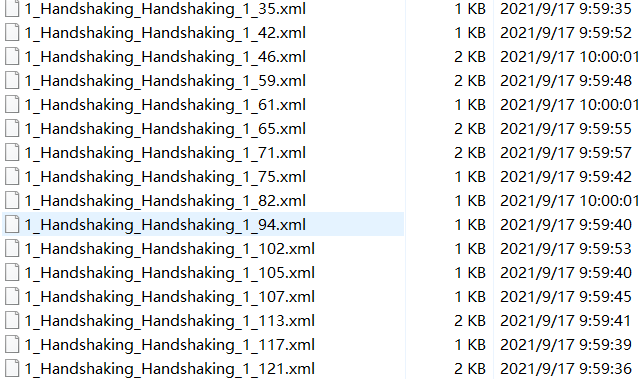

- The xml folder holds the .xml annotation files (labeled with labelImg), with the following contents:

- The dataSet folder holds the training, validation, and test set splits. They are generated by a script: create a split_train_val.py file with the following code:

    # coding:utf-8
    import os
    import random
    import argparse

    parser = argparse.ArgumentParser()
    # Path of the xml files; change it to match your own data (in VOC, xml files are usually stored under Annotations)
    parser.add_argument('--xml_path', default='xml', type=str, help='input xml label path')
    # Output path of the dataset split (in VOC, this is usually ImageSets/Main)
    parser.add_argument('--txt_path', default='dataSet', type=str, help='output txt label path')
    opt = parser.parse_args()

    # With trainval_percent = 1.0 every sample goes into trainval, so test.txt stays empty
    trainval_percent = 1.0
    train_percent = 0.9
    xmlfilepath = opt.xml_path
    txtsavepath = opt.txt_path
    total_xml = os.listdir(xmlfilepath)
    if not os.path.exists(txtsavepath):
        os.makedirs(txtsavepath)

    num = len(total_xml)
    list_index = range(num)
    tv = int(num * trainval_percent)
    tr = int(tv * train_percent)
    trainval = random.sample(list_index, tv)
    train = random.sample(trainval, tr)

    file_trainval = open(txtsavepath + '/trainval.txt', 'w')
    file_test = open(txtsavepath + '/test.txt', 'w')
    file_train = open(txtsavepath + '/train.txt', 'w')
    file_val = open(txtsavepath + '/val.txt', 'w')

    for i in list_index:
        # Strip the ".xml" suffix to get the image name
        name = total_xml[i][:-4] + '\n'
        if i in trainval:
            file_trainval.write(name)
            if i in train:
                file_train.write(name)
            else:
                file_val.write(name)
        else:
            file_test.write(name)

    file_trainval.close()
    file_train.close()
    file_val.close()
    file_test.close()

- After running the code, the following four txt files are generated in the dataSet folder:

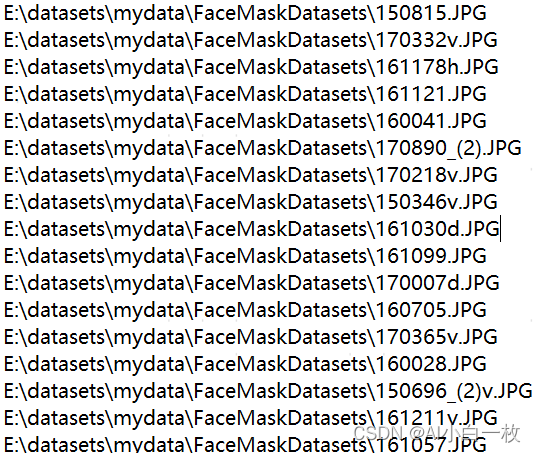
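A quick sanity check on the generated files can catch a bad split early. The sketch below is an assumption-laden helper (the names `read_names` and `check_splits` are made up): it verifies that train and val do not overlap, that together they equal trainval, and that test is disjoint from trainval.

```python
import os


def read_names(path):
    """Read one image name per line from a split file."""
    with open(path) as f:
        return f.read().split()


def check_splits(txt_dir):
    """Verify the four split files are consistent and return their sizes."""
    splits = ("train", "val", "test", "trainval")
    names = {s: read_names(os.path.join(txt_dir, s + ".txt")) for s in splits}
    train, val, test, trainval = (set(names[s]) for s in splits)
    assert not train & val, "train and val overlap"
    assert not trainval & test, "trainval and test overlap"
    assert train | val == trainval, "train + val does not equal trainval"
    return {s: len(n) for s, n in names.items()}
```

Running `check_splits("dataSet")` after the split script returns the number of names in each file, or raises an `AssertionError` that names the inconsistency.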

- The contents inside the three txt files are as follows:

2. Convert the data format

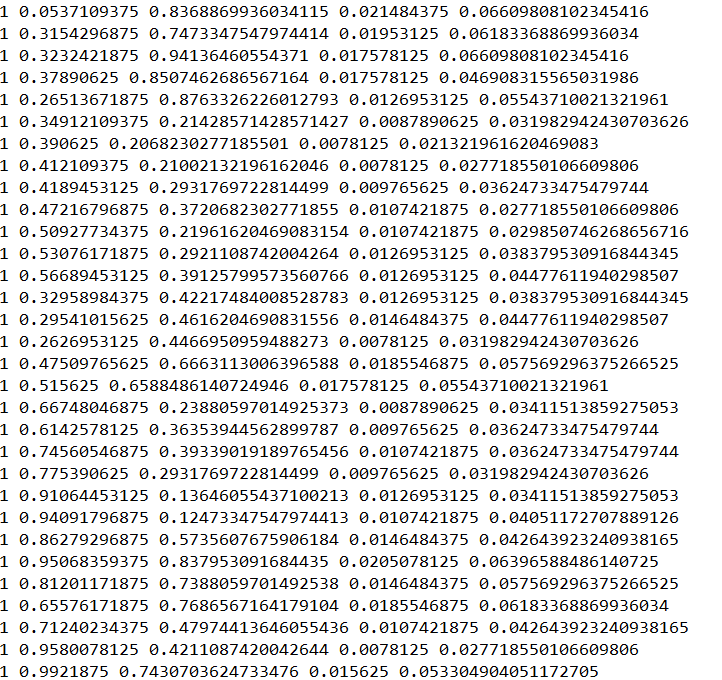

Next, prepare the labels by converting the dataset to YOLO txt format: the bbox information in each xml annotation is extracted into a txt file, one txt file per image, and each line of the file describes one object as class, x_center, y_center, width, height. The format is as follows:
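As a worked example of this format, the sketch below converts one VOC box into one YOLO label line. It uses the plain center formula with no pixel offset, and the box, image size, and function name `voc_to_yolo_line` are made up for illustration.

```python
def voc_to_yolo_line(class_id, box, image_size):
    """Convert a VOC box (xmin, ymin, xmax, ymax) in pixels to a YOLO label line."""
    xmin, ymin, xmax, ymax = box
    img_w, img_h = image_size
    # Center and size, normalized by the image width and height
    x_center = (xmin + xmax) / 2.0 / img_w
    y_center = (ymin + ymax) / 2.0 / img_h
    width = (xmax - xmin) / img_w
    height = (ymax - ymin) / img_h
    return f"{class_id} {x_center:.6f} {y_center:.6f} {width:.6f} {height:.6f}"


# A 200x240 pixel box in a 640x480 image, class index 0
print(voc_to_yolo_line(0, (100, 120, 300, 360), (640, 480)))
# prints: 0 0.312500 0.500000 0.312500 0.500000
```

All four coordinates are fractions of the image size, so a valid label line only contains values between 0 and 1 after the class index.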

- Create a voc_label.py file that generates the label files (used in training) for the training, validation, and test sets and also writes the image paths of each set into a txt file. The code is as follows:

    # -*- coding: utf-8 -*-
    import os
    import xml.etree.ElementTree as ET

    sets = ['train', 'val', 'test']
    classes = ["a", "b"]  # Change to your own categories
    abs_path = os.getcwd()
    print(abs_path)


    def convert(size, box):
        # size = (width, height); box = (xmin, xmax, ymin, ymax), all in pixels
        dw = 1. / (size[0])
        dh = 1. / (size[1])
        x = (box[0] + box[1]) / 2.0 - 1
        y = (box[2] + box[3]) / 2.0 - 1
        w = box[1] - box[0]
        h = box[3] - box[2]
        # Normalize by the image width and height
        x = x * dw
        w = w * dw
        y = y * dh
        h = h * dh
        return x, y, w, h


    def convert_annotation(image_id):
        with open('data/mydata/xml/%s.xml' % (image_id), encoding='UTF-8') as in_file:
            root = ET.parse(in_file).getroot()
        size = root.find('size')
        w = int(size.find('width').text)
        h = int(size.find('height').text)
        with open('data/mydata/labels/%s.txt' % (image_id), 'w') as out_file:
            for obj in root.iter('object'):
                # Use the tag name that matches your xml files (labelImg writes lowercase 'difficult')
                # difficult = obj.find('difficult').text
                difficult = obj.find('Difficult').text
                cls = obj.find('name').text
                if cls not in classes or int(difficult) == 1:
                    continue
                cls_id = classes.index(cls)
                xmlbox = obj.find('bndbox')
                b1 = float(xmlbox.find('xmin').text)
                b2 = float(xmlbox.find('xmax').text)
                b3 = float(xmlbox.find('ymin').text)
                b4 = float(xmlbox.find('ymax').text)
                # Clip boxes that extend past the image border
                if b2 > w:
                    b2 = w
                if b4 > h:
                    b4 = h
                bb = convert((w, h), (b1, b2, b3, b4))
                out_file.write(str(cls_id) + " " + " ".join([str(a) for a in bb]) + '\n')


    # Adjust the paths below to your own directory layout
    os.makedirs('data/mydata/labels/', exist_ok=True)
    os.makedirs('paper_data', exist_ok=True)
    for image_set in sets:
        image_ids = open('data/mydata/dataSet/%s.txt' % (image_set)).read().strip().split()
        list_file = open('paper_data/%s.txt' % (image_set), 'w')
        for image_id in image_ids:
            list_file.write(abs_path + '/mydata/images/%s.jpg\n' % (image_id))
            convert_annotation(image_id)
        list_file.close()

3. Configuration file

1) Configuration of the dataset

Create a new mydata.yaml file (the name can be customized) in the mydata folder. It holds the paths of the training and validation split files (train.txt and val.txt, generated by running voc_label.py), followed by the number of target classes and the list of class names. The content of mydata.yaml is as follows:

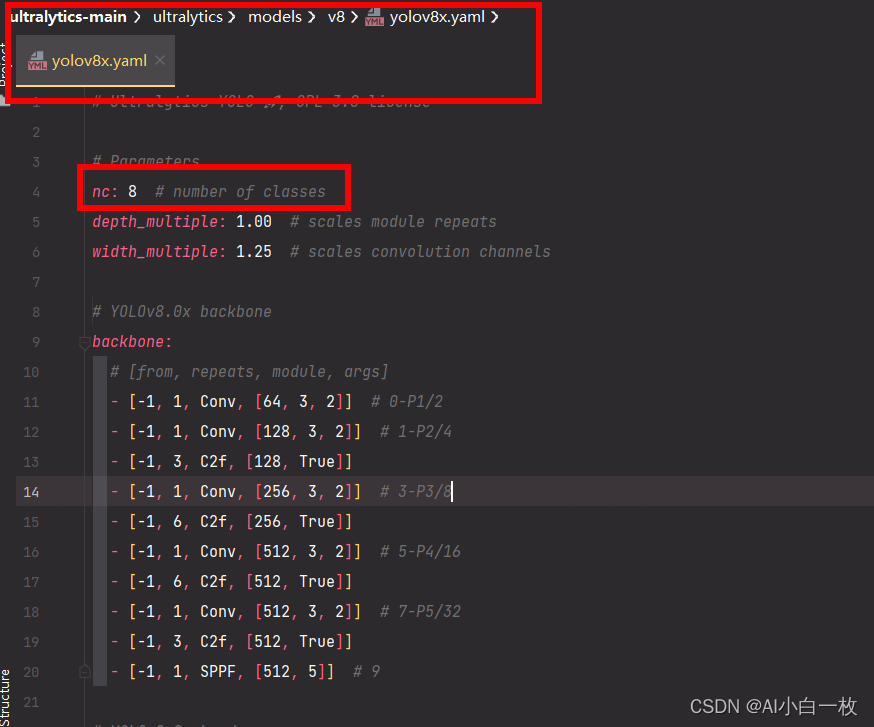
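For readers who cannot see the screenshot, the sketch below builds a file with the fields described above. The key names train, val, nc, and names are an assumption based on that description, and the paths and the helper name `build_dataset_yaml` are placeholders; compare the result with the screenshot before relying on it.

```python
def build_dataset_yaml(train_list, val_list, class_names):
    """Build the text of a dataset config with train/val paths, nc, and names."""
    names = ", ".join(f"'{name}'" for name in class_names)
    lines = [
        f"train: {train_list}",        # txt file listing the training image paths
        f"val: {val_list}",            # txt file listing the validation image paths
        f"nc: {len(class_names)}",     # number of classes
        f"names: [{names}]",           # class names, in class-index order
    ]
    return "\n".join(lines) + "\n"


# Hypothetical paths; point them at the files that voc_label.py wrote
print(build_dataset_yaml("paper_data/train.txt", "paper_data/val.txt", ["a", "b"]))
```

The order of `class_names` should match the `classes` list in voc_label.py, because the class index in each label line refers to a position in that list.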

2) Select a model you need

The ultralytics/models/v8/ directory contains the model configuration files. The s, m, l, and x versions are provided, in increasing size (training time also grows as the architecture gets larger). Assuming you use yolov8x.yaml, only one parameter needs to change: set nc to your own number of classes, which must be an integer (optional), as follows:
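The nc edit can also be scripted. The sketch below works on the text of a config file and assumes it contains a top-level line of the form `nc: <number>`; the sample fragment and the function name `set_nc` are illustrative, not copied from the real yolov8x.yaml.

```python
import re


def set_nc(yaml_text, num_classes):
    """Replace the value on the first top-level 'nc:' line with num_classes."""
    if not isinstance(num_classes, int) or num_classes < 1:
        raise ValueError("num_classes must be a positive integer")
    new_text, count = re.subn(
        r"(?m)^(nc:\s*)\d+",                       # 'nc:' at the start of a line
        lambda m: m.group(1) + str(num_classes),   # keep the prefix, swap the number
        yaml_text,
        count=1,
    )
    if count == 0:
        raise ValueError("no top-level 'nc:' line found")
    return new_text


# Hypothetical fragment of a model config
sample = "# Parameters\nnc: 80  # number of classes\n"
print(set_nc(sample, 2))
```

Editing the text with a regular expression rather than a YAML parser keeps the comments and layout of the original file intact.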

At this point, the custom dataset has been created and the next step is to train the model.
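Before training, it can be worth validating the generated label files, since a malformed line tends to surface only as a confusing error later. The sketch below checks a single label line; the function name `validate_label_line` is hypothetical, and the rules (five fields, a class index within range, coordinates between 0 and 1) follow the label format described earlier.

```python
def validate_label_line(line, num_classes):
    """Return a list of problems found in one label line (empty if it is valid)."""
    parts = line.split()
    if len(parts) != 5:
        return [f"expected 5 fields, got {len(parts)}"]
    problems = []
    try:
        class_id = int(parts[0])
        if not 0 <= class_id < num_classes:
            problems.append(f"class id {class_id} is outside 0..{num_classes - 1}")
    except ValueError:
        problems.append("class id is not an integer")
    for name, text in zip(("x_center", "y_center", "width", "height"), parts[1:]):
        try:
            value = float(text)
        except ValueError:
            problems.append(f"{name} is not a number")
            continue
        if not 0.0 <= value <= 1.0:
            problems.append(f"{name}={value} is outside [0, 1]")
    return problems
```

Looping over every line of every file in the labels folder and printing the non-empty results gives a quick report of annotations that need fixing.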

III. Model training

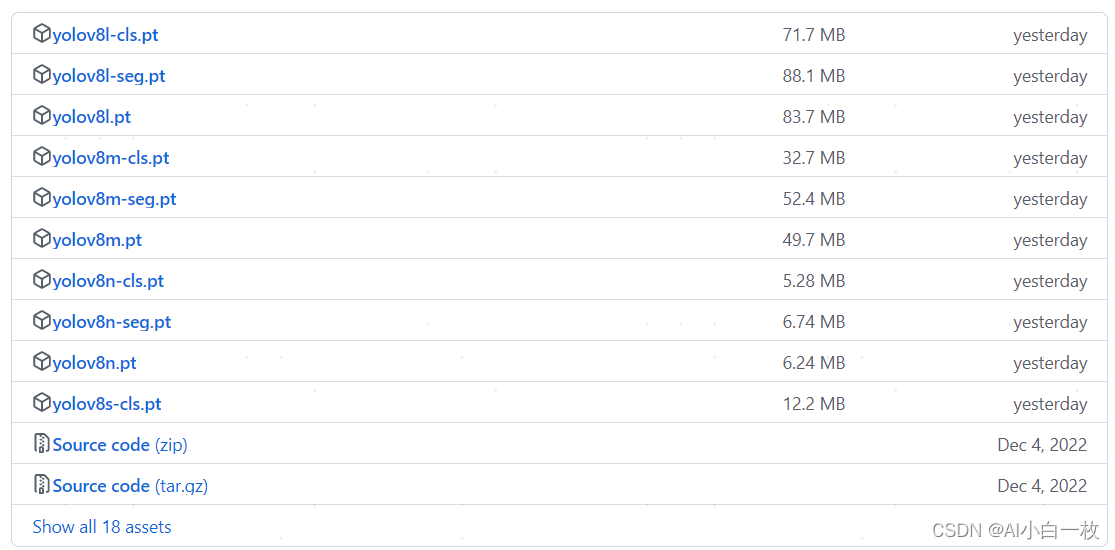

1. Download the pretrained model

Download the corresponding version of the model from the YOLOv8 open-source repository on GitHub.

2. Training

Next you can start training the model with the following command:

    yolo task=detect mode=train model=yolov8x.yaml data=mydata.yaml epochs=1000 batch=16

The parameters above are explained below:

task: selects the type of task; options are ['detect', 'segment', 'classify', 'init'].

mode: selects whether to train, validate, or predict; options are ['train', 'val', 'predict'].

model: selects the YOLOv8 model configuration file; options are yolov8s.yaml, yolov8m.yaml, yolov8l.yaml, and yolov8x.yaml.

data: selects the dataset configuration file you created.

epochs: how many times the entire dataset is iterated over during training; lower it if your graphics card is not powerful enough.

batch: how many images are processed before each weight update (the mini-batch of gradient descent); lower it if your graphics card is not powerful enough.
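To make the relationship between these parameters concrete, the sketch below assembles the same command from its parts and estimates how many weight updates one epoch takes. Both helper names are made up, the 900-image dataset is a hypothetical figure, and the estimate assumes the last, partially filled batch is still processed.

```python
import math


def build_train_command(model, data, epochs, batch, task="detect", mode="train"):
    """Return the yolo command as an argument list (suitable for subprocess.run)."""
    settings = {
        "task": task,
        "mode": mode,
        "model": model,
        "data": data,
        "epochs": epochs,
        "batch": batch,
    }
    return ["yolo"] + [f"{key}={value}" for key, value in settings.items()]


def updates_per_epoch(num_images, batch):
    """Number of mini-batches (weight updates) needed to see every image once."""
    return math.ceil(num_images / batch)


print(" ".join(build_train_command("yolov8x.yaml", "mydata.yaml", 1000, 16)))
# prints: yolo task=detect mode=train model=yolov8x.yaml data=mydata.yaml epochs=1000 batch=16
print(updates_per_epoch(900, 16))
# prints: 57
```

Halving batch roughly doubles the number of updates per epoch while lowering memory use per step, which is why it is the first parameter to reduce on a smaller graphics card.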

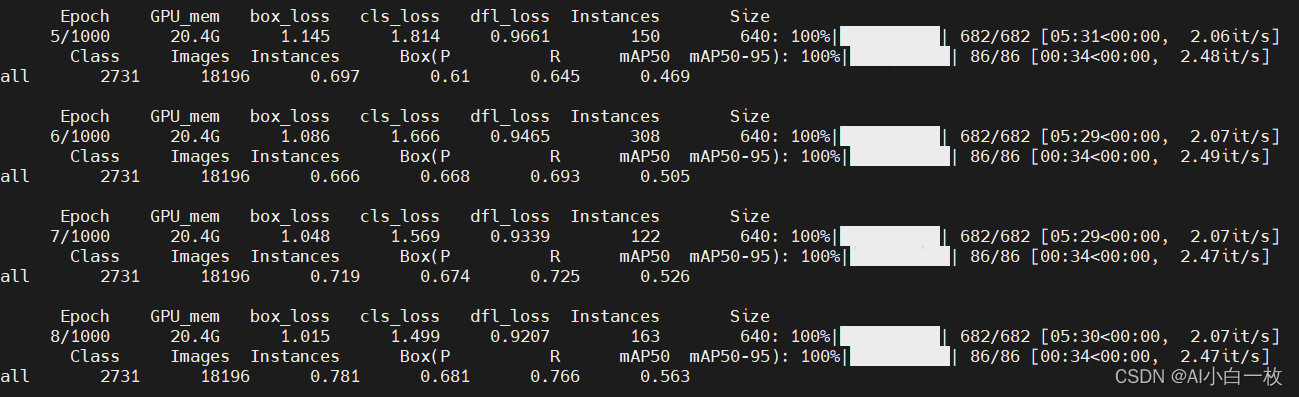

The training process is shown below