Contents

1.1 Introduction to the basic concepts of GANs

1.2 Basic Architecture Diagram of GAN

2. The formation process of a GAN

2.1 Training the GAN: Generative and Discriminative Networks (Optimization)

An introduction to the concept of exactly how to train:

An introduction to the principles of exactly how to train (on a mathematical level):

3. Convolutional Neural Networks (ConvNets)

3.1 Convolutional Neural Networks and Traditional Multilayer Neural Networks

3.1.1 Structure of Convolutional Networks

0. Preparatory knowledge

Beginners who start learning about GANs often do not know what a GAN is and assume it is something lofty. Studying the basic idea without a clear mental picture tends to make GANs seem more complicated than they are, so we first introduce a simple version of the idea here; having a basic concept in mind will help with later learning.

GANs belong to the field of artificial intelligence: by studying a large amount of data about some thing, a model learns the regularities of its distribution at the mathematical level and constructs a reasonable mapping function that can be used to solve real problems.

- What is an AI system? A machine or computer that can perform certain functions like a human being.

- Why develop an AI system? AI systems with specific functions are developed for human use, because some functions are unavailable to humans or difficult for them to perform (e.g., speech recognition, face recognition, predicting Go moves).

- How exactly is an AI system developed? By learning from large amounts of data to summarize the patterns of things, that is, by constructing mapping functions.

Artificial intelligence is essentially the construction of a mapping function for data. Constructing such a function requires a process of learning, generalizing, and summarizing, which in turn requires a model that can learn. A generative adversarial network is one such model.

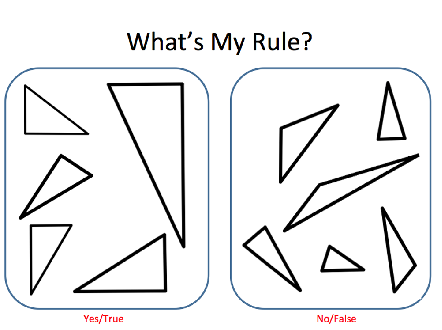

A small example: summarizing the rule for right triangles.

1. By studying a large number of triangles, we can find the rule: a triangle with one angle of 90° is a right triangle.

2. From this we construct a right-triangle mapping function: for the input x1 = 60°, x2 = 90°, x3 = 30° the output is 1; for the input x1 = 70°, x2 = 60°, x3 = 50° the output is 0.
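The right-triangle rule can be written down directly as a mapping function. The following is a minimal Python sketch; the function name `is_right_triangle` and the tolerance parameter `tol` are illustrative assumptions, not part of the original example.

```python
def is_right_triangle(x1: float, x2: float, x3: float, tol: float = 1e-9) -> int:
    """Map three interior angles (in degrees) to 1 (right triangle) or 0 (not)."""
    angles = (x1, x2, x3)
    # The angles must describe a valid triangle: all positive and summing to 180.
    if any(a <= 0 for a in angles) or abs(sum(angles) - 180.0) > tol:
        raise ValueError("angles do not form a triangle")
    # The summarized rule: one angle of 90 degrees means a right triangle.
    return int(any(abs(a - 90.0) <= tol for a in angles))


print(is_right_triangle(60, 90, 30))  # 1
print(is_right_triangle(70, 60, 50))  # 0
```

Here the rule is written by hand; the point of a learning model is to arrive at such a mapping from data instead.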

1. Introduction to GAN

1.1 Introduction to the basic concepts of GANs

GAN stands for Generative Adversarial Network. A generative adversarial network is actually a combination of two networks: the generator network (Generator) is responsible for generating simulated data, and the discriminator network (Discriminator) is responsible for determining whether its input is real or generated. The generator keeps optimizing the data it generates so that the discriminator cannot tell it apart from real data, while the discriminator keeps optimizing itself to make its judgments more accurate. The two are in confrontation, hence the name adversarial network.

The “network” here refers to a neural network, because GANs are designed on top of the neural network model (a computational model inspired by biological neural networks). For a detailed description of the neural network model, see Introduction to Neural Networks and Yongle Li: Convolutional Neural Networks. To be clear about why the neural network model is used rather than some other model: it was not chosen for its own sake, it simply happens to be well suited to implementing generative adversarial networks.

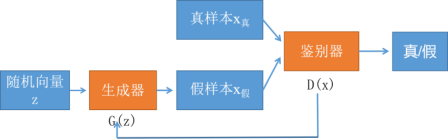

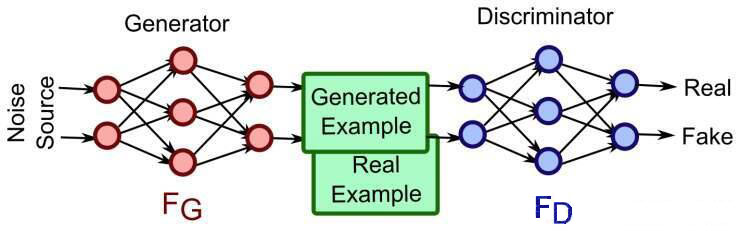

1.2 Basic Architecture Diagram of GAN

As I said above, both generative and discriminative networks are models of neural networks.


Generator: produces data (in most cases images) with the ultimate goal of “fooling” the discriminator.

Discriminator: judges whether an image is real or machine-generated, with the goal of picking out the “fake data” made by the generator.
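To make the two roles concrete, here is a deliberately tiny Python sketch in which both networks are reduced to one-line functions on a single number. The names `generator` and `discriminator` and all parameter values are hypothetical placeholders; a real GAN would use multi-layer neural networks and image-sized data.

```python
import math
import random


def generator(z: float, scale: float = 2.0, shift: float = 1.0) -> float:
    """Hypothetical generator G: turns random noise z into a fake data point."""
    return scale * z + shift


def discriminator(x: float, w: float = 0.5, b: float = 0.0) -> float:
    """Hypothetical discriminator D: probability in (0, 1) that x is real."""
    return 1.0 / (1.0 + math.exp(-(w * x + b)))


random.seed(0)
z = random.gauss(0.0, 1.0)    # random input noise
fake = generator(z)           # G(z): a generated sample
p_real = discriminator(fake)  # D(G(z)): the discriminator's judgment of it
print(f"z={z:.3f}  G(z)={fake:.3f}  D(G(z))={p_real:.3f}")
```

The generator never sees real data directly; it only receives feedback through the discriminator's judgment.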

2. The formation process of a GAN

Real-world problem requirements → building a GAN framework that implements the functionality (programming) → training the GAN (generator network and discriminator network) → mature GAN model → application. This section describes how “training the GAN” is carried out and its core points.

2.1 Training the GAN: Generative and Discriminative Networks (Optimization)

A GAN model cannot perform its intended function right away; it first has to be trained. I call the states before and after training the “original GAN model” and the “mature GAN model”: the original GAN model goes through a training process to become a mature GAN model, and this mature GAN model is the one we actually apply. So what exactly does this training process train? It trains the generator and discriminator networks, and the training involves a data set.

- An introduction to the concept of exactly how to train:

- An introduction to the principles of exactly how to train (on a mathematical level):

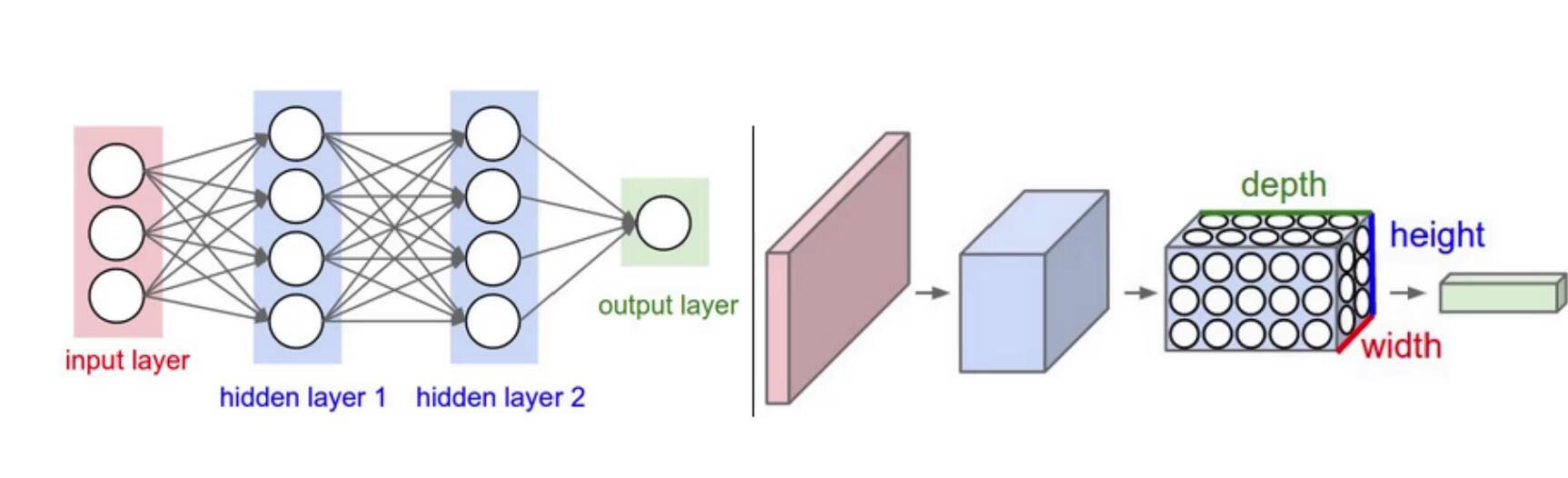

How exactly are the generator and discriminator networks optimized? This involves two core issues: the neural network architecture and the loss function. These are the two basic elements that make optimization (training) possible.

1) Neural network architecture: As mentioned earlier, both the generator and the discriminator are built on neural networks, chosen because they are well suited to the task. The following describes why the neural network model is suited to learning the distributional regularities of things (a mature GAN is a GAN that has finished learning).


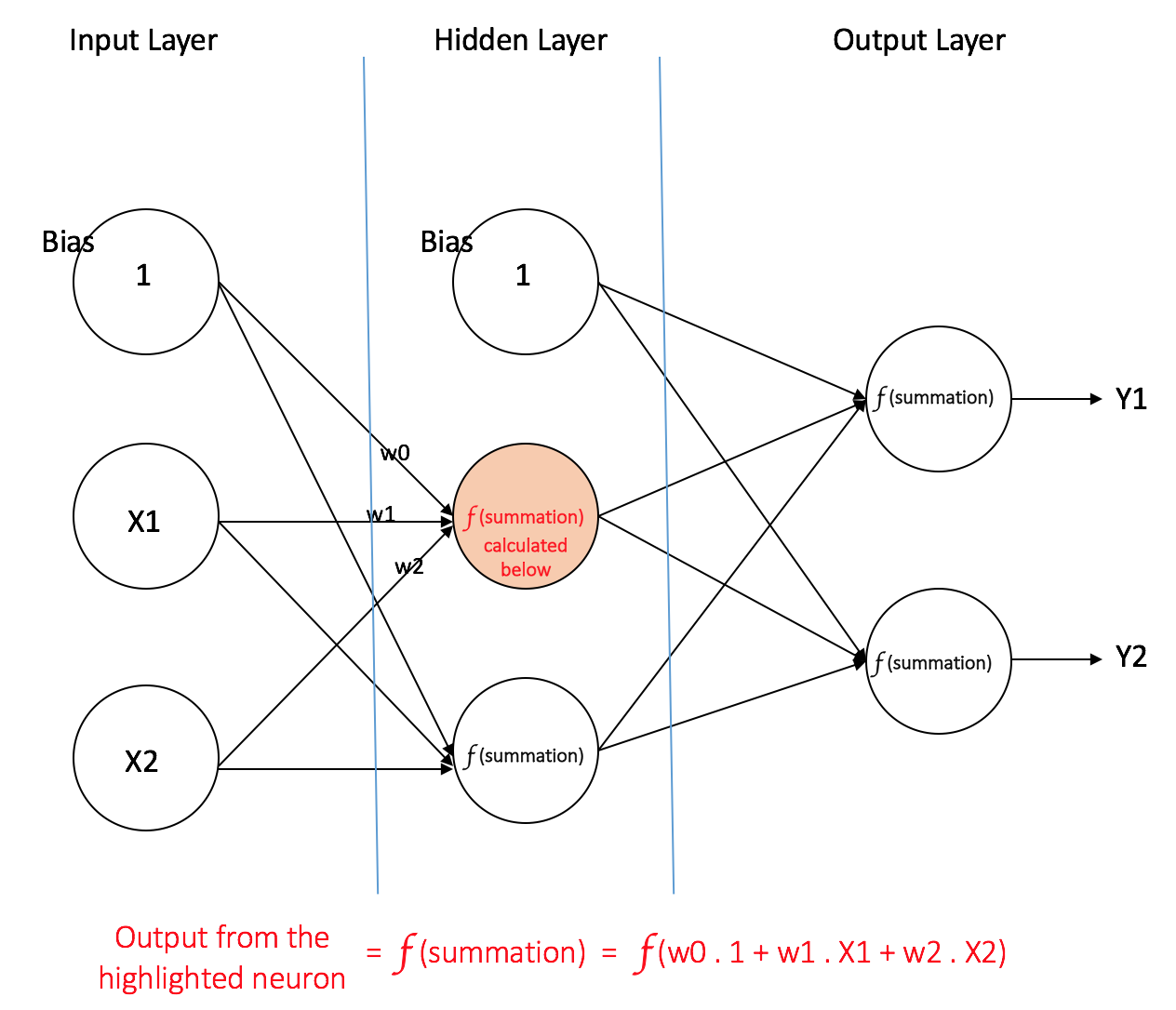

There is a weight on the connection between every two neurons in two neighboring layers.

Input Layer: There is only one input layer. It receives the features X1, X2, … of the data and passes them unchanged to the hidden layer; the input layer does not perform any computation.

Hidden Layer: There can be one or more hidden layers. Each processes the data coming from the previous layer and passes the result to the next layer, and ultimately to the output layer. Here f refers to the activation function.

Output Layer: It takes its inputs from the hidden layer and performs computations on them; the resulting values Y1, Y2, … are the outputs of the multilayer perceptron.
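The layer-by-layer computation described above can be sketched as a forward pass in plain Python. This is a minimal illustration under stated assumptions: the sigmoid activation, the bias terms, the layer sizes, and all weight values are made up for the example and are not taken from the figure.

```python
import math


def sigmoid(v: float) -> float:
    """One possible choice for the activation function f."""
    return 1.0 / (1.0 + math.exp(-v))


def dense(inputs, weights, biases, f):
    """One layer: neuron i computes f(sum_j w_ij * x_j + b_i)."""
    return [f(sum(w * x for w, x in zip(row, inputs)) + b)
            for row, b in zip(weights, biases)]


def forward(x, hidden_w, hidden_b, out_w, out_b):
    # Input layer: the features are passed on unchanged, no computation.
    h = dense(x, hidden_w, hidden_b, sigmoid)  # hidden layer
    return dense(h, out_w, out_b, sigmoid)     # output layer


x = [1.0, 0.5]                                     # features X1, X2
hidden_w = [[0.2, -0.4], [0.7, 0.1], [-0.5, 0.3]]  # 3 hidden neurons
hidden_b = [0.0, 0.1, -0.1]
out_w = [[0.3, -0.2, 0.5]]                         # 1 output neuron
out_b = [0.05]
y = forward(x, hidden_w, hidden_b, out_w, out_b)
print(y)                                           # output Y1
```

Each row of a weight matrix holds the weights on the connections into one neuron, which is why training a network amounts to adjusting these numbers.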

Each connection carries a weight between neuron j and neuron i (written here as $w_{ij}$). The core of optimization is to optimize these weight parameters. How? First introduce a loss function (the loss function is equivalent to the error); once there is an error, the parameters can be adjusted based on it.

2) Loss function

Purpose: The loss function is used to estimate how much a model’s predicted value disagrees with the true value (i.e., the error). For further information, see: Cross Entropy Loss Function.

Loss function for the generative network:


$$L_G = H\big(1, D(G(z))\big)$$

In the above equation, G stands for the generative network, D stands for the discriminative network, H stands for cross entropy, and z is the input random data. $D(G(z))$ is the discriminator’s probability judgment on the generated data, where 1 means the data is judged absolutely real and 0 means it is judged absolutely fake. $H(1, D(G(z)))$ represents the distance of that judgment from 1. For the generative network to achieve good results, the discriminator must judge the generated data as real (i.e., the smaller the distance between D(G(z)) and 1, the better).
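As a numeric illustration of the generator loss, the sketch below assumes H is the standard binary cross entropy, so that the loss is H(1, D(G(z))). The function names `cross_entropy` and `generator_loss` and the clamping constant `eps` are assumptions made for this example.

```python
import math


def cross_entropy(target: float, p: float, eps: float = 1e-12) -> float:
    """Binary cross entropy H(target, p) for one predicted probability p."""
    p = min(max(p, eps), 1.0 - eps)  # clamp to avoid log(0)
    return -(target * math.log(p) + (1.0 - target) * math.log(1.0 - p))


def generator_loss(d_of_g_z: float) -> float:
    """H(1, D(G(z))): small when the discriminator believes the fake is real."""
    return cross_entropy(1.0, d_of_g_z)


print(generator_loss(0.9))  # discriminator fooled: small loss
print(generator_loss(0.1))  # discriminator not fooled: large loss
```

The closer D(G(z)) gets to 1, the smaller the loss, which matches the goal stated above.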

Loss function for discriminative networks:


$$L_D = H\big(1, D(x)\big) + H\big(0, D(G(z))\big)$$

In the above equation, x is real data. Keep in mind that $H(1, D(x))$ represents the distance of the discriminator’s judgment on real data from 1, and $H(0, D(G(z)))$ represents the distance of its judgment on generated data from 0. For the discriminative network to achieve good results, it must see real data as real and generated data as fake (i.e., its output on real data is close to 1 and its output on generated data is close to 0).
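The discriminator loss can be illustrated the same way. This sketch again assumes H is the standard binary cross entropy and that the two distances are simply added, giving H(1, D(x)) + H(0, D(G(z))); the function names and the `eps` constant are assumptions for the example.

```python
import math


def cross_entropy(target: float, p: float, eps: float = 1e-12) -> float:
    """Binary cross entropy H(target, p) for one predicted probability p."""
    p = min(max(p, eps), 1.0 - eps)  # clamp to avoid log(0)
    return -(target * math.log(p) + (1.0 - target) * math.log(1.0 - p))


def discriminator_loss(d_of_x: float, d_of_g_z: float) -> float:
    """Distance of D(x) from 1 plus distance of D(G(z)) from 0."""
    return cross_entropy(1.0, d_of_x) + cross_entropy(0.0, d_of_g_z)


print(discriminator_loss(0.9, 0.1))  # good discriminator: small loss
print(discriminator_loss(0.5, 0.5))  # undecided discriminator: larger loss
```

Note that the term involving D(G(z)) pulls in the opposite direction from the generator's loss, which is where the adversarial relationship shows up numerically.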

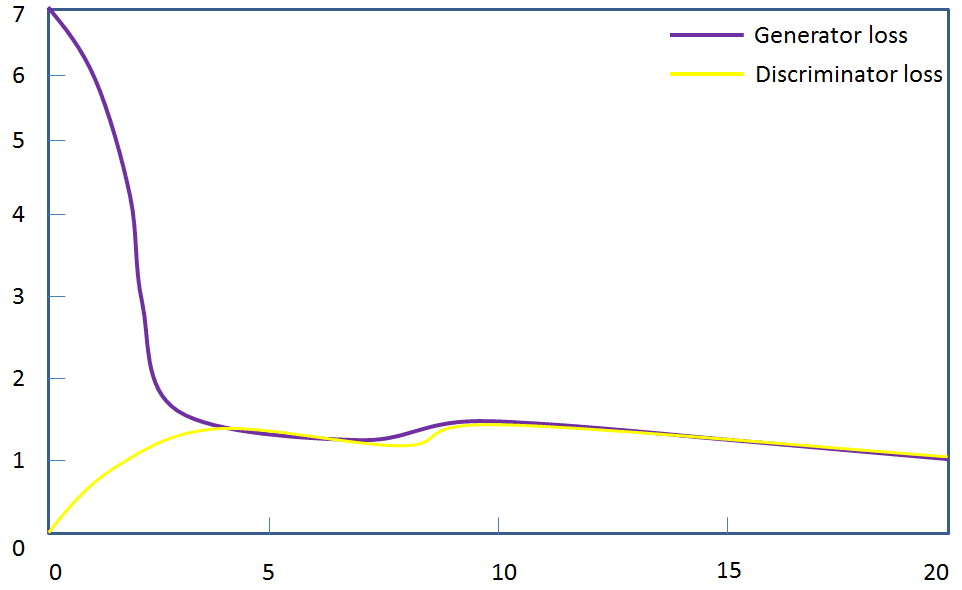

Optimization principle: With their loss functions defined, the generative and discriminative networks can each use the error backpropagation (BP) algorithm together with an optimization method (e.g., gradient descent) to adjust their parameters, continuously improving the performance of both networks (in their mature state, the generative and discriminative networks have each learned a reasonable mapping function). Training a generative adversarial network is this process of parameter optimization. For a worked optimization example, see: Yongle Li: Machine Learning and Neural Networks.
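To show what “adjusting parameters based on the error” looks like, here is a minimal sketch of gradient descent on a one-neuron discriminator with a cross-entropy loss. It is not a full GAN training loop: the generator is frozen and replaced by a fixed list of made-up “generated” samples, and the data values, learning rate, and step count are all assumptions chosen for illustration.

```python
import math


def sigmoid(v: float) -> float:
    return 1.0 / (1.0 + math.exp(-v))


real = [3.8, 4.1, 4.3, 3.9]   # made-up "real" samples (target 1)
fake = [0.2, -0.1, 0.4, 0.0]  # made-up "generated" samples (target 0)
data = [(x, 1.0) for x in real] + [(x, 0.0) for x in fake]

w, b, lr = 0.0, 0.0, 0.1      # parameters and learning rate
for step in range(500):
    grad_w = grad_b = 0.0
    for x, target in data:
        p = sigmoid(w * x + b)  # forward pass: D(x)
        # For cross entropy with a sigmoid output, the error signal
        # propagated back to the pre-activation is (p - target).
        grad_w += (p - target) * x
        grad_b += (p - target)
    # Gradient descent: move each parameter against its average gradient.
    w -= lr * grad_w / len(data)
    b -= lr * grad_b / len(data)

print(f"D(4.0)={sigmoid(w * 4.0 + b):.3f}  D(0.0)={sigmoid(b):.3f}")
```

In a real GAN the same kind of update is applied alternately to the discriminator and the generator, each using its own loss, and the gradients are propagated through many layers rather than one neuron.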

“Neural network” does not refer to one narrow form of network connection; it is a broad concept covering any network designed on demand with neurons as the basic unit, for example the multilayer perceptron and the convolutional neural network. Different neural network models differ in architecture, weight parameters, activation functions, and so on.

3. Convolutional Neural Networks (ConvNets)

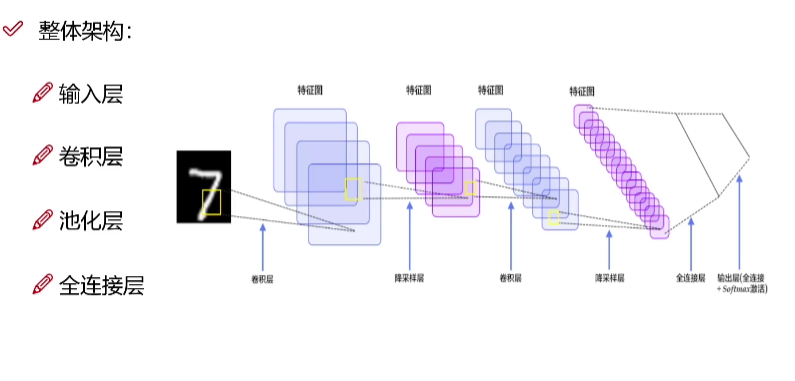

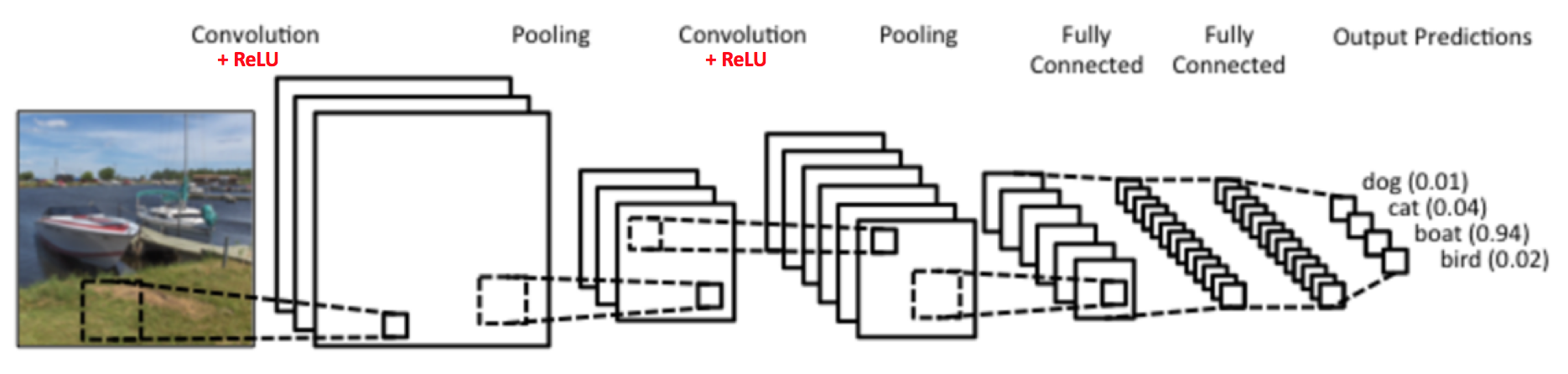

The convolution operation extracts image features, and these features are the data fed into the input layer of a traditional neural network. In other words, a convolutional neural network is equivalent to adding convolutional layers in front of a multilayer perceptron network.
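As a small illustration of convolution as feature extraction, the sketch below slides a 2×2 kernel over a 4×4 image in plain Python. The image, the kernel values, and the function name `conv2d` are made up for the example; stride 1 and no padding are assumed, and, as is usual in ConvNets, the kernel is applied without flipping.

```python
def conv2d(image, kernel):
    """Slide the kernel over the image (stride 1, no padding)."""
    kh, kw = len(kernel), len(kernel[0])
    out_h = len(image) - kh + 1
    out_w = len(image[0]) - kw + 1
    # Each output value is the sum of elementwise products of the kernel
    # and the image patch it currently covers.
    return [[sum(image[i + di][j + dj] * kernel[di][dj]
                 for di in range(kh) for dj in range(kw))
             for j in range(out_w)]
            for i in range(out_h)]


image = [
    [0, 0, 1, 1],
    [0, 0, 1, 1],
    [0, 0, 1, 1],
    [0, 0, 1, 1],
]
vertical_edge = [[-1, 1],
                 [-1, 1]]  # responds where brightness rises left to right

feature_map = conv2d(image, vertical_edge)
print(feature_map)  # [[0, 2, 0], [0, 2, 0], [0, 2, 0]]
```

The feature map is nonzero only in the column where the dark-to-bright edge sits, which is the “feature” this kernel extracts.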

3.1 Convolutional Neural Networks and Traditional Multilayer Neural Networks

3.1.1 Structure of Convolutional Networks

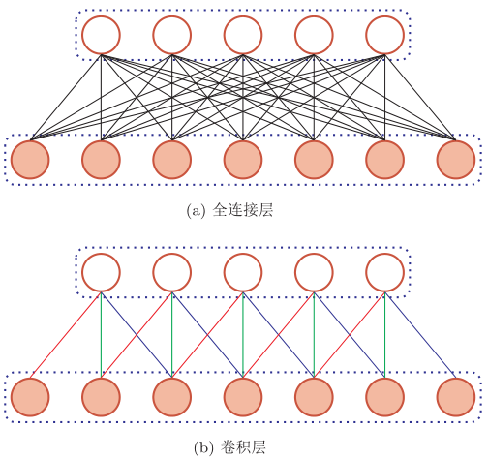

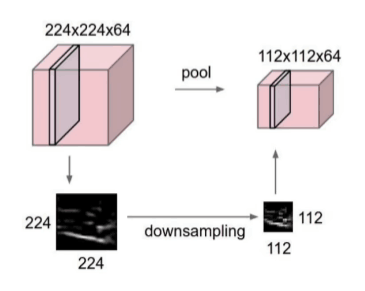

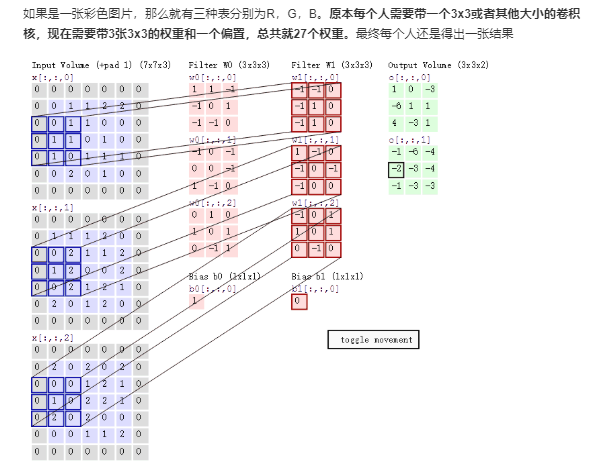

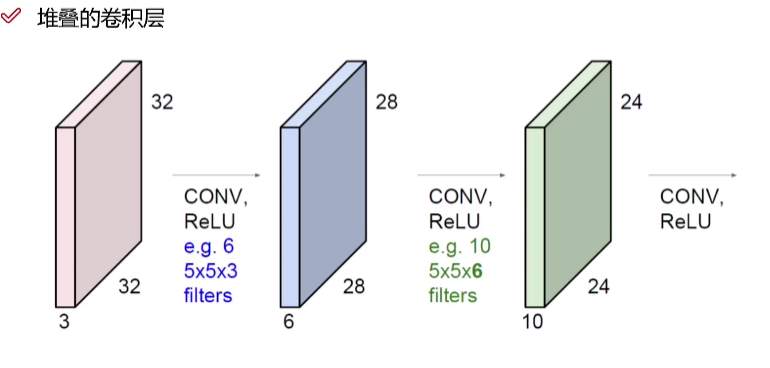

Similarities: The basic components of a neural network are an input layer, hidden layers, and an output layer. A convolutional neural network is characterized by hidden layers that are divided into convolutional layers, pooling layers (also known as downsampling layers), and activation layers.

Distinction 1: While most layers of a traditional network are fully connected to each other, the layers of a convolutional network work as follows:

- Convolutional layer: extracts features by sliding a kernel over the original image

- Pooling layer: reduces the complexity of the network by downsampling the extracted features, which reduces the number of parameters to be learned (max pooling and average pooling)

- Activation layer: adds nonlinearity, increasing the network’s ability to separate data nonlinearly

- Fully connected (FC) layer: for a classification task, a fully connected layer is added as the final output layer, used for classification and loss computation; it is not added for other tasks
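The activation and pooling layers from the list above can be sketched in a few lines of plain Python. ReLU is assumed as the activation function (the text does not name one), the pooling windows are assumed to be non-overlapping 2×2 blocks, and the feature-map values are made up.

```python
def relu(feature_map):
    """Activation layer: keep positive values, set negatives to 0."""
    return [[max(0, v) for v in row] for row in feature_map]


def pool(feature_map, reduce, size=2):
    """Non-overlapping pooling; assumes both dimensions are divisible by size."""
    return [[reduce([feature_map[i + di][j + dj]
                     for di in range(size) for dj in range(size)])
             for j in range(0, len(feature_map[0]), size)]
            for i in range(0, len(feature_map), size)]


def mean(values):
    return sum(values) / len(values)


feature_map = [
    [1, -2, 3, 0],
    [-1, 5, -3, 2],
    [0, -4, 2, 2],
    [7, 1, -6, -1],
]
activated = relu(feature_map)
print(pool(activated, max))   # max pooling:     [[5, 3], [7, 2]]
print(pool(activated, mean))  # average pooling: [[1.5, 1.25], [2.0, 1.0]]
```

Pooling shrinks the 4×4 map to 2×2, so any layer that follows has a quarter as many inputs to handle.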

Distinction 2: While most traditional networks are two-dimensional, a convolutional neural network is three-dimensional: its neurons are arranged along width, height, and depth.


3.1.2 Convolution step

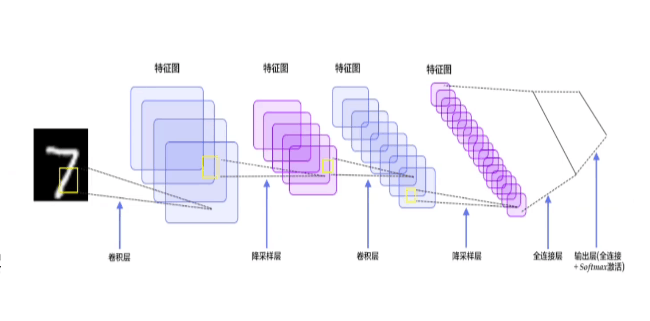

I. Introduction to convolutional operations (conceptual level)

LeNet architecture (1990s): LeNet was one of the first convolutional neural networks and helped advance the field of deep learning. Yann LeCun’s pioneering work went through several successful iterations from 1988 onward before being named LeNet5. At the time, the LeNet architecture was used primarily for character recognition tasks, such as reading zip codes and digits.

Below, we will visualize how the LeNet architecture learns to recognize images to understand how convolutional neural networks work.

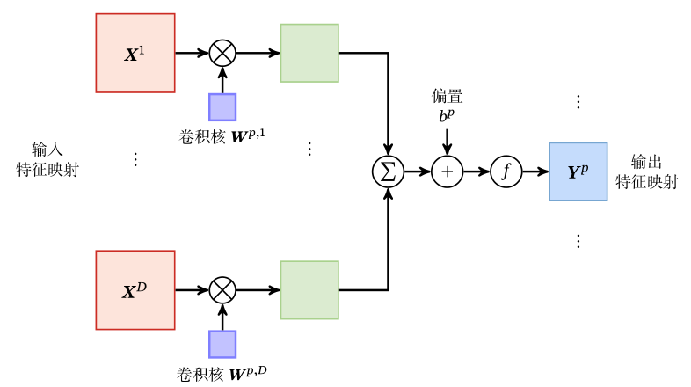

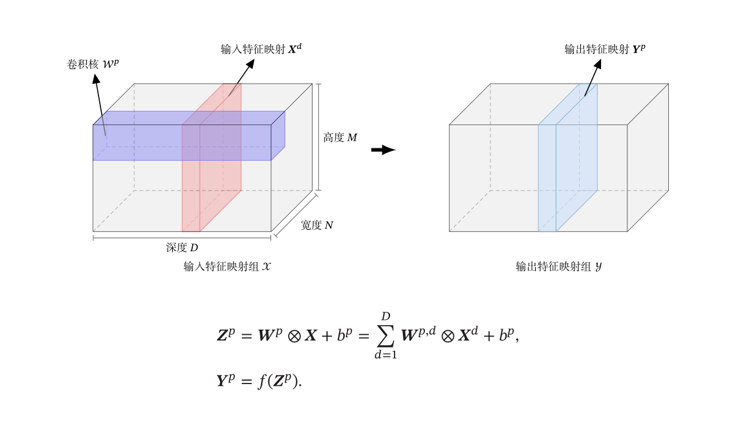

Depth D is the number of feature maps. I think of the rectangle on the left below as the feature maps on the right rotated by 90°.


Looking at convolutional layer 1 in the figure below, each convolutional kernel is 5×5×3, that is, each kernel has a depth of three layers, and there are six such kernels.
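The kernel dimensions determine both the size of the layer's output and its number of learnable parameters. The sketch below works this out for six 5×5×3 kernels; the 32×32×3 input size, stride 1, zero padding, and one bias per kernel are assumptions, since the text does not state them.

```python
def conv_output_shape(in_h, in_w, kernel, num_kernels, stride=1, padding=0):
    """Height, width, and depth of the feature maps a conv layer produces."""
    out_h = (in_h + 2 * padding - kernel) // stride + 1
    out_w = (in_w + 2 * padding - kernel) // stride + 1
    # Each kernel produces one feature map, so depth = number of kernels.
    return out_h, out_w, num_kernels


def conv_param_count(kernel, in_depth, num_kernels):
    """Weights per kernel (kernel * kernel * in_depth) plus one bias each."""
    return (kernel * kernel * in_depth + 1) * num_kernels


# Assumed 32x32 RGB input; six 5x5x3 kernels as in convolutional layer 1.
print(conv_output_shape(32, 32, kernel=5, num_kernels=6))  # (28, 28, 6)
print(conv_param_count(kernel=5, in_depth=3, num_kernels=6))  # 456
```

The kernel depth of 3 must match the depth of the input, while the number of kernels (six) sets the depth D of the output.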


For specific examples explaining neural networks, see: Yongle Li: Convolutional Neural Networks
