catalogs

Part 2 Excel data filtering and distribution statistics

Part 3 Pandas Conditional Data Filtering

1. Different dimensions of conditional data screening

2, composite conditions screening

Part 1 Introduction

In Python, the third-party library Pandas is the main tool for data cleansing, processing, and analysis. Almost all the needs based on tabular data can be realized in Pandas, which is one of the factors that make Python the dominant language in the field of data analysis. In the last technical article, we introduced the data selection function in Pandas, which lays a good foundation for us to batch process data in the future, and in this article, we will continue to introduce Pandas.Learn to handle the data filtering function which is very commonly used in tabular data.Pandas is used for data filtering in office software such as Excel / WPS. In Excel / WPS and other office software, data filtering is often used, and Pandas naturally has data filtering capabilities, but not only that, because Pandas backed by the Python language, so it has a natural advantage in character processing, and extends to the data filtering, Pandas can be with regular expressions on the data to do whatever you want, Pandas can filter data as it pleases with regular expressions, but what’s so great about it? Here we learn.This tutorial is based on pandas version 1.5.3. All Python code in this article was written in the integrated development environment Visual Studio Code (VScode) using the interactive development environment Jupyter Notebook, and the code shared in this article should be opened using VScode.Tap the link to the original article to see how to get all the demo code for this article and the data used for the demo: Python Teaching | Pandas’ fabulous conditional data filtering Presentation data is part of the information of A-share listed companies (18 fields, statistic time is May 12, 2023)

Part 2 Excel data filtering and distribution statistics

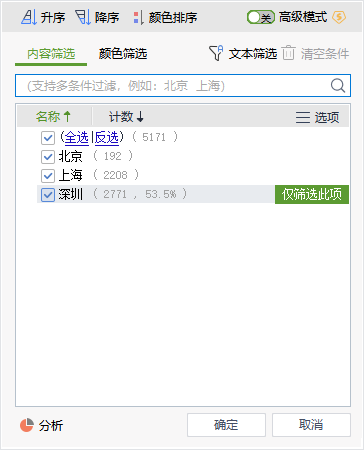

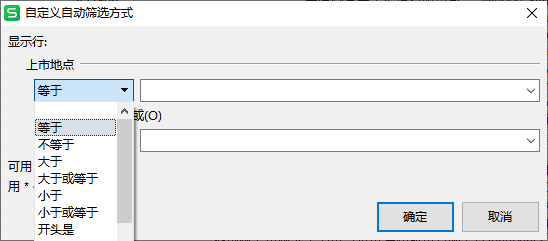

In Excel / WPS and other office software, the most basic data filtering is mainly based on the contents of the cell to WPS, for example, select a column of content, click on the upper right [filter], and then click on the field name of the lower-right button will pop-up filtering by value filtering box, as shown in the following chart.

Listing LocationCode for field value distribution.

Read and display some of the data:

# Import pandas

import pandas as pd

# Read demo data

data = pd.read_excel('. /A-share Listed Companies Partial Information (as of 2023.5.12).xlsx')

# Handle field names, do simplification

data.columns = [COL.split('\n')[0] for COL in list(data.columns)]

# Display the first two lines of data

data.head(2)

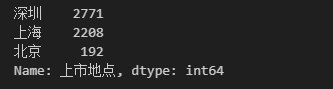

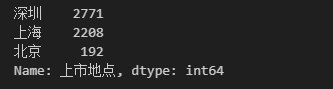

Listing LocationDistribution of values in fields

# of statistics

data['Listing Location'].value_counts()

# The results are shown in the chart below, with the largest number of A-share listed companies listed in "Shenzhen", with a value of 2771.

Listing LocationDistribution of values in the field, presented as a ratio

# Ratio statistics, add a parameter normalize=True to volume statistics

data['Listing Location'].value_counts(normalize=True)

*and?Fuzzy matching (*representing any number of characters.?(representing any single character), rather like a regular expression.

This article is a simple comparison between Excel / WPS and Pandas in terms of data filtering, the intention is not to step on one to praise the other, but to illustrate the differences between the different tools. If you can learn the practical operation of Excel / WPS here, this is of course also a good thing.

Part 3 Pandas Conditional Data Filtering

In the previous installment of the Introduction to Data Selection article, we introduced the use of thelocfunction for simple conditional filtering. In fact, in addition to thelocfunction, there are several other functions or forms in Pandas that can be used to filter data, such as thequeryfunction, the slice ([]), etc. Among these functions, the author believes that the best way to generalize is to use slices ([]) to do data filtering. The form of slicing and filtering is as follows.

Variable name [<conditional expression>]dataAs an example, the screening conditions from different dimensions are introduced.

1. Different dimensions of conditional data screening

(1) Comparing data values

One of the simplest, yet most common data filtering conditions is by determining whether the value of a field is equal to, or not equal to, a specified value. For example when we want toScreening data of A-share listed companies with listing in Beijing, you can use the following filtering code.# Filter data of A-share listed companies with listing in Beijing

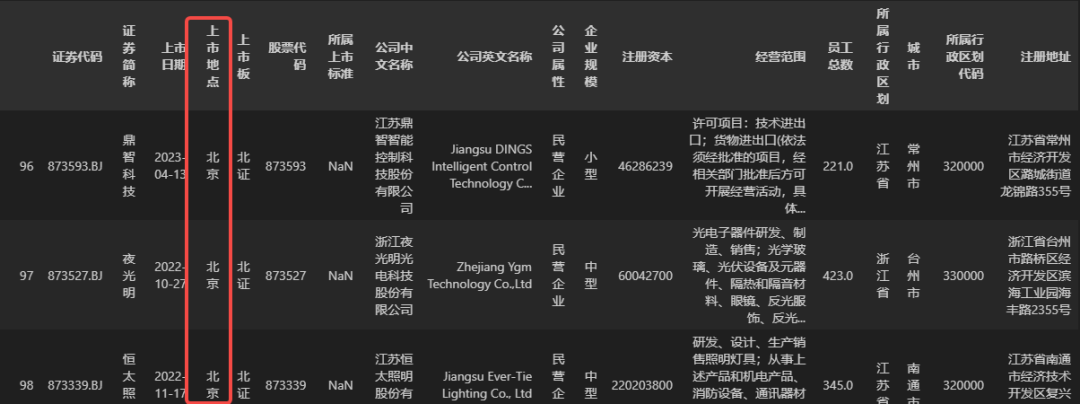

data[data['Listing Location'] == 'Beijing']data['Listing Location'] == 'Beijing'It is the condition of filtering, and the filtering result (part) is shown below.

dataIf a company is not listed in Beijing, then the filtering condition is that the listing location is not equal to “Beijing”, and the code is written by adding the equal sign to the above code.==Change to not equal sign!=This is sufficient, and will not be demonstrated specifically here.

When there are more situations, such asshortlistmunicipalitiesFields are data from one of Beijing, Shanghai, Guangzhou, or Shenzhen.There is more than one method that can be used, and the following is the code for one of them.

# Filter out data where the city field is one of Beijing, Shanghai, Guangzhou, or Shenzhen.

data[data['city'].isin(['Beijing', 'Shanghai', 'Guangzhou', 'Shenzhen'])]

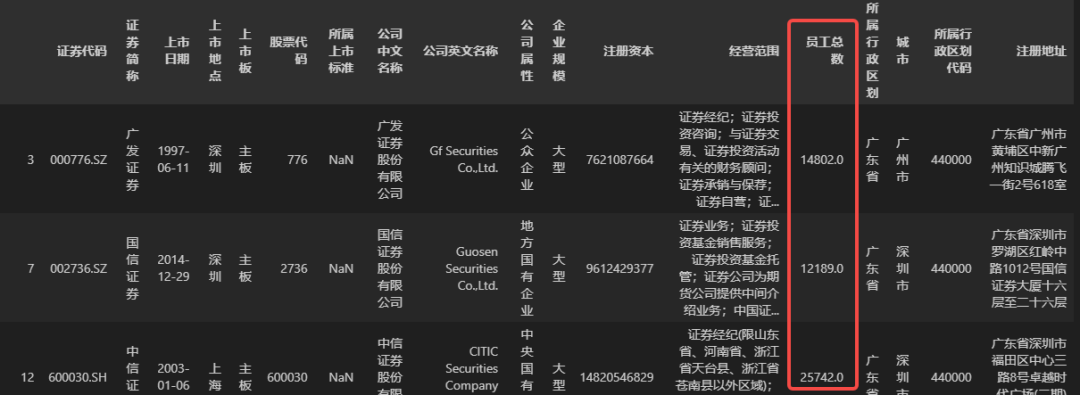

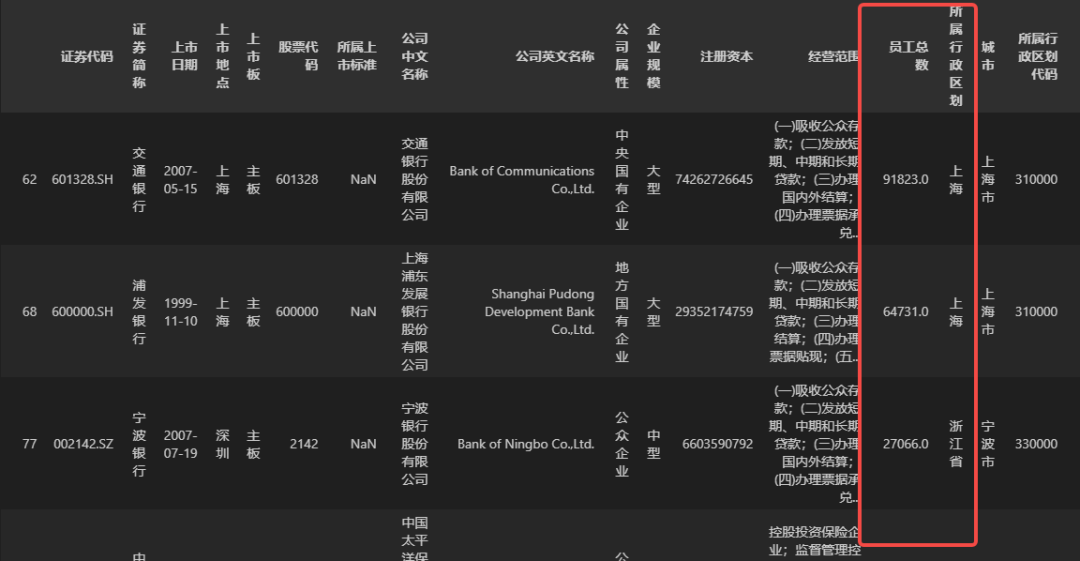

# Filter companies with 10,000 or more employees

data[data['total employees'] >= 10000]

Total number of employeesgreater than or equal toCode of the administrative division to which it belongsdata (of course this condition doesn’t make any practical sense, it’s just an example here), you can use the following code.

# Start by converting the Affiliated Administrative Code field type to numeric

data['Administrative division code'] = data['Administrative division code'].asype(int)

# Filter out data where the total number of employees is greater than or equal to the administrative division code to which they belong

data[data['Total number of employees'] >= data['Administrative division code']]

(2) Whether the value is null

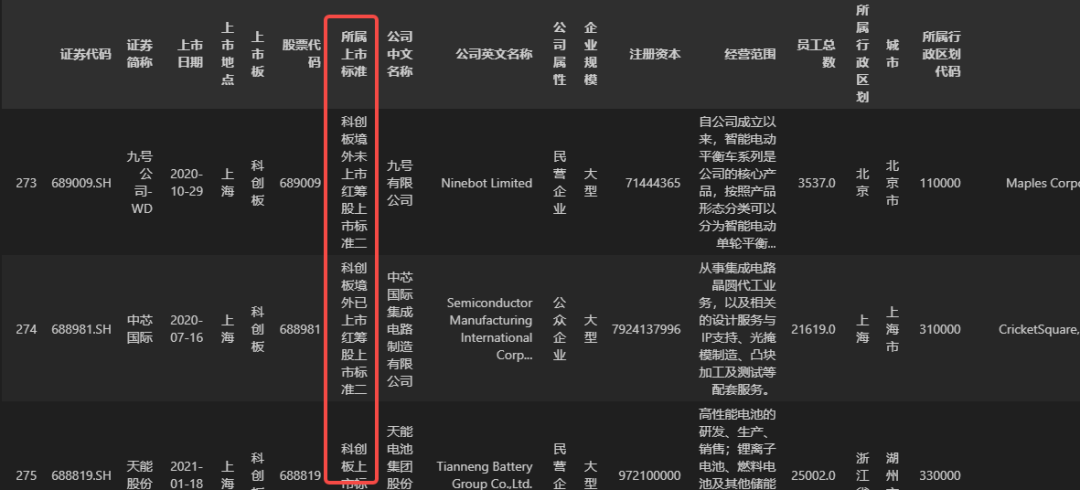

There are times when we need to do filtering based on whether the value of a field is null or not, for exampleFiltering datadataIn theListing criteriaData with non-null field values, you can use the following code.

# Filter data with non-null values in the belonging to listing criteria field

data[data['Listing criteria'].notna()]

The default null value in Python is None, and in Pandas, the default null (or missing) value in tabular data isNaNIt is a constant in the numpy library, meaning Not a Number, and since Pandas was developed based on the numpy library, this setting is preserved in the table data by setting the null value to NaN (numpy.nan). Theisna()andnotna()The null values recognized by the function also include None and NaN, but not the null character ”, which is the empty character''Not a null value in pandas.

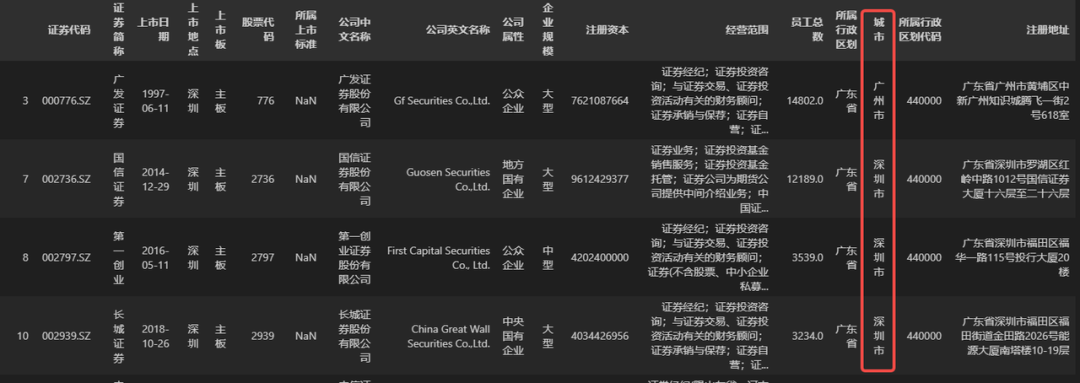

(3) Text Screening

As mentioned in the introduction to Excel / WPS, this type of office software can do text filtering on a field, filtering the field contains, does not contain, the beginning of the (not) is, the end of the (not) is a keyword data, in addition to the use of some wildcard characters to do fuzzy filtering. This kind of filtering operations in Excel has been very powerful, but these have a regular expression supported by the face of Pandas, are considered a piece of cake. Here we introduce Pandas how to filter data based on the text content of the field. First, the simplest condition is whether the field contains (or does not contain) a keyword, for exampleFiltering datadataIn theCompany Name in ChineseEnterprises with the keyword “securities” in the field, you can use the following data filtering code.

# Filter the data for companies with the keyword "securities" in the Chinese name field.

data[data['Company Name in Chinese'].str.contains('Securities')]

# 41 eligible data after screening

Company Name in ChineseFor data that does not contain the keyword “securities”, a wave character can be added to the top of the conditional expression in the above code.~, the code is as follows (no longer show the filtering results).

# Filter the data for enterprises whose Chinese company name field does not contain the keyword "securities".

data[~data['Company Chinese Name'].str.contains('Securities')]containsfunction, just replace the keyword. For examplescreeningCompany Name in ChinesewordData containing “Securities”, “Banks” or “Insurance” in the fieldWhen you do, you can use a regular expression to process it, with the following code.

Regular expression tutorials have been updated, please go to the end of the article to find the relevant links

# Filter data with "Securities", "Banking" or "Insurance" in the Company Name field.

data[data['Company Chinese Name'].str.contains('Securities|Banking|Insurance')]

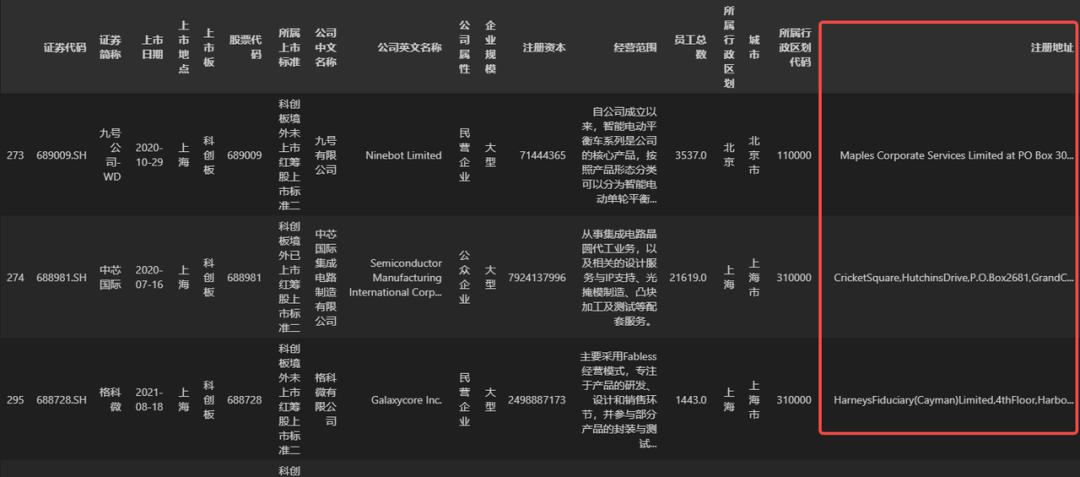

registered addressData with no Chinese in the fieldThis can be done using the code below.

# Filter data that does not contain Chinese characters in the Registration Address field

data[~data['registered address'].str.contains('[\u4e00-\u9fa5]')]

According to the text to do filtering, there is a need to pay special attention to, that is, to filter the field, must be all the values are character types, can not be empty values (if contains empty values, you can fill in the missing value for the character type), can not contain the number type and date type, because regular expressions can not be done on these types of processing.

(4) Data value length

Sometimes the length of the text in a field can also reflect some information, for example, the more characters in the registered address, the more detailed the description of the address, and Pandas can be filtered according to the length of the characters, for example, when the need for thescreeningregistered addressAll data in a field with a character count less than or equal to 10The following code can be used when

# Filter all data in the Registered Address field with a character count less than or equal to 10.

data[data['registered address'].str.len() < 10]

(5) Date Filtering

In addition to filtering on numeric values and characters, Pandas can also filter data by date, as Excel does, for exampleScreening for new A-share companies to be listed in 2023, then you can use the following code.# Start by converting the listed date field to a date type in python

data['Listing Date'] = data['Listing Date'].asype('datetime64[ns]')

# Screening for new A-share companies to be listed in 2023

data[data['Listing Date'] >= pd.Timestamp(2023, 1, 1)]

(6) Other

In addition to the above data filtering dimensions, Pandas data filtering can also have other dimensions, such as according to the index value, field name filtering, but the practicality of these points is not high, this will not be too much introduction.2, composite conditions screening

You may have noticed that the data filtering described above has only one filter condition in all the filtering cases, although the dimensions are different. In fact, Pandas data filtering can have more than one condition (two or more). When there are more than one condition, it is best to enclose each condition in parentheses, and if two of the conditions are “or” relationships, then use the logical or notation|to connect them; if the relationship between two conditions is “and”, then the logical and notation should be used&to connect them. In Pandas, all the conditional forms described above can be combined in any permutation. Here are a few examples of how to do compound condition filtering.

[Example 1] Filter out the data of A-share listed companies whose administrative district is located in “Jiangsu, Zhejiang and Shanghai” and whose total number of employees is greater than or equal to 20,000.

## [Example 1] Filter the data of A-share listed companies whose administrative district is located in "Jiangsu, Zhejiang and Shanghai" and whose total number of employees is greater than or equal to 20,000.

# A few unnecessary spaces have been added to the code for ease of viewing

data[ (data['Administrative divisions'].isin(['Shanghai', 'Jiangsu', 'Zhejiang'])) & (data['Total employees'] >= 20000)]

Enterprise sizeis large, orregistered capitalGreater than or equal to 1 billion enterprise data.

## [Example 2] Filter the data of enterprises whose business size is large, or whose registered capital is greater than or equal to 1 billion dollars.

data[(data['Enterprise Size'] == 'Large') | (data['Registered Capital'] > 1000000000)]